Red Teaming Techniques That Reflect How Real Adversaries Operate

Security teams often talk about exposure. Fewer are willing to test it properly. Red teaming techniques exist to close that gap. Most organisations run vulnerability scans. Many commission annual penetration tests. Both have value. Neither fully answers a harder question: how would a determined attacker move through the business without being noticed?

Red teaming is not a louder penetration test. It is a controlled simulation of a genuine threat. It focuses on behaviour, timing, access paths and human reaction. The aim is not to list weaknesses. It is to observe how detection and response actually perform under pressure.

Recent breaches have shown why this matters. The SolarWinds supply chain compromise did not hinge on a single missing patch. It exposed weaknesses in trust relationships and monitoring discipline. The ransomware attack on Colonial Pipeline demonstrated how quickly operational impact follows identity compromise. Both incidents reflected layered failure rather than a single technical flaw.

Red teaming techniques are designed around that reality.

What Red Teaming Really Tests

A mature red team engagement examines three things at once.

- First, the technical control surface. Network segmentation, endpoint detection, identity management, cloud configuration. All must hold up under deliberate evasion.

- Second, the people. How quickly does someone escalate a suspicious login? Does anyone question unusual data movement? Are alerts investigated or simply closed?

- Third, the processes that connect everything together. Incident response plans often look solid on paper. Real testing shows where communication breaks down or where responsibility becomes unclear.

Unlike routine testing, red teaming operates over time. Attack paths are developed gradually. Sometimes nothing happens for days. That silence itself becomes part of the test.

The most effective red teaming techniques mirror how threat actors think, not how compliance frameworks are structured.

Intelligence-Led Reconnaissance

Every serious adversary begins with reconnaissance. Red teams do the same, although within defined scope and approval.

Public information provides a starting point. Staff roles on professional networking sites reveal technology stacks and security vendors. Domain records and exposed services fill in more detail.

What matters is context. An exposed VPN portal alone means little. Combined with visible password reuse from previous breaches, it becomes a viable entry route.

Reconnaissance also explores behavioural patterns. When are IT changes typically deployed? Which teams respond fastest? Subtle observation often yields more access than noisy scanning.

It is worth noting how structured threat modelling has influenced modern red team practice. Frameworks like MITRE ATT&CK provide mapped adversary behaviours. Red teams use them as reference, not scripts. Real attackers rarely follow clean matrices.

Initial Access and Foothold Establishment

Once reconnaissance identifies opportunity, access must be gained without triggering immediate detection.

Phishing remains effective, not because users are careless but because business communication is fast and repetitive. Carefully crafted pretexts that align with current projects stand a higher chance of success.

Credential attacks continue to feature heavily in red teaming techniques. Password spraying, token theft and abuse of legacy authentication protocols are often quieter than exploiting a software vulnerability.
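From the defender's side, password spraying has a recognisable shape: one source fails against many different accounts, with only one or two attempts per account so that lockout thresholds are never reached. The sketch below flags that pattern in authentication logs. It is a minimal illustration, assuming a hypothetical `AuthEvent` record (not any product's log schema) and placeholder thresholds that would need tuning against real traffic.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class AuthEvent:
    # Hypothetical normalised authentication record.
    timestamp: datetime
    source_ip: str
    username: str
    success: bool


def find_spray_sources(events, window=timedelta(minutes=30),
                       min_accounts=10, max_attempts_per_account=2):
    """Return source IPs whose failed logins, within some sliding window,
    hit at least `min_accounts` distinct accounts while no single account
    sees more than `max_attempts_per_account` failures."""
    failures = defaultdict(list)
    for event in events:
        if not event.success:
            failures[event.source_ip].append(event)

    suspicious = set()
    for ip, evs in failures.items():
        evs.sort(key=lambda e: e.timestamp)
        start = 0
        for end in range(len(evs)):
            # Advance the left edge until the window spans at most `window`.
            while evs[end].timestamp - evs[start].timestamp > window:
                start += 1
            per_account = defaultdict(int)
            for e in evs[start:end + 1]:
                per_account[e.username] += 1
            if (len(per_account) >= min_accounts
                    and max(per_account.values()) <= max_attempts_per_account):
                suspicious.add(ip)
                break
    return suspicious
```

A slow spray spread over many hours never fills a 30-minute window, which is exactly the kind of detection threshold a red team probes gradually.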

In cloud environments, misconfigured storage or excessive permissions frequently provide the first foothold. Access does not need to be dramatic. A single low-privilege account can be enough.

What distinguishes red teaming from opportunistic hacking is restraint. Actions are measured. Detection thresholds are tested gradually. Noise is avoided deliberately.

Privilege Escalation and Lateral Movement

Gaining access is rarely the end goal. The more revealing phase begins once the red team is inside.

Modern enterprise environments rely heavily on identity. Directory services, federated authentication, cloud synchronisation. Compromise one component and the ripple effect can be significant.

Red teaming techniques frequently focus on:

- Abuse of delegated permissions

- Kerberos ticket manipulation

- Misuse of service accounts

- Exploitation of over-permissioned cloud roles

None of these rely on zero-day exploits. They rely on configuration drift and complexity.
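Over-permissioned cloud roles are often visible in the policy documents themselves. As an illustration of how that kind of drift can be audited, the sketch below parses an AWS-style IAM policy in JSON and reports Allow statements that combine a wildcard action (`*` or `service:*`) with a wildcard resource. It is deliberately narrow: it assumes the standard `Statement`/`Effect`/`Action`/`Resource` layout and ignores `NotAction`, `Condition` and partial resource patterns such as `arn:aws:s3:::bucket/*`, so a real review would need considerably more.

```python
import json


def _as_list(value):
    # IAM policy fields such as Action and Resource may be a string or a list.
    if value is None:
        return []
    return [value] if isinstance(value, str) else list(value)


def find_wildcard_grants(policy_json):
    """Return (statement_index, actions, resources) for Allow statements that
    pair a full or service-level wildcard action with a wildcard resource."""
    policy = json.loads(policy_json)
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):
        # A policy may contain a single statement object instead of a list.
        statements = [statements]

    findings = []
    for i, stmt in enumerate(statements):
        if stmt.get("Effect") != "Allow":
            continue
        actions = _as_list(stmt.get("Action"))
        resources = _as_list(stmt.get("Resource"))
        broad_action = any(a == "*" or a.endswith(":*") for a in actions)
        broad_resource = "*" in resources
        if broad_action and broad_resource:
            findings.append((i, actions, resources))
    return findings
```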

Lateral movement is usually slow. Red teams may use built-in administrative tools to avoid detection. Living-off-the-land techniques blend into routine activity. Endpoint detection platforms such as those from Microsoft and other major vendors are capable, but they depend on correct tuning and active monitoring.
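Living-off-the-land activity is hard to catch with signatures because the binaries are legitimate. One common behavioural heuristic is "first seen" analysis: flag an administrative tool when it is run by an account that has never used it before. The sketch below assumes a hypothetical event shape of `(host, user, process_name)` tuples and an illustrative watch list of built-in Windows binaries; both would need adapting to real endpoint telemetry.

```python
# Illustrative watch list of built-in Windows administration binaries.
ADMIN_TOOLS = {"powershell.exe", "wmic.exe", "schtasks.exe", "sc.exe", "net.exe"}


def new_admin_tool_usage(baseline, recent):
    """baseline, recent: iterables of (host, user, process_name) tuples.

    Returns recent admin-tool events whose (user, tool) pair never
    appeared during the baseline period."""
    seen = {
        (user, proc.lower())
        for _, user, proc in baseline
        if proc.lower() in ADMIN_TOOLS
    }
    return [
        (host, user, proc)
        for host, user, proc in recent
        if proc.lower() in ADMIN_TOOLS and (user, proc.lower()) not in seen
    ]
```

Keying on `(user, tool)` keeps noise low. Keying on `(host, user, tool)` would also surface an established admin account appearing on new machines, at the cost of more alerts, which is the alert-fatigue trade-off described below.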

In many engagements, escalation succeeds not because defences are absent, but because alert fatigue has dulled sensitivity.

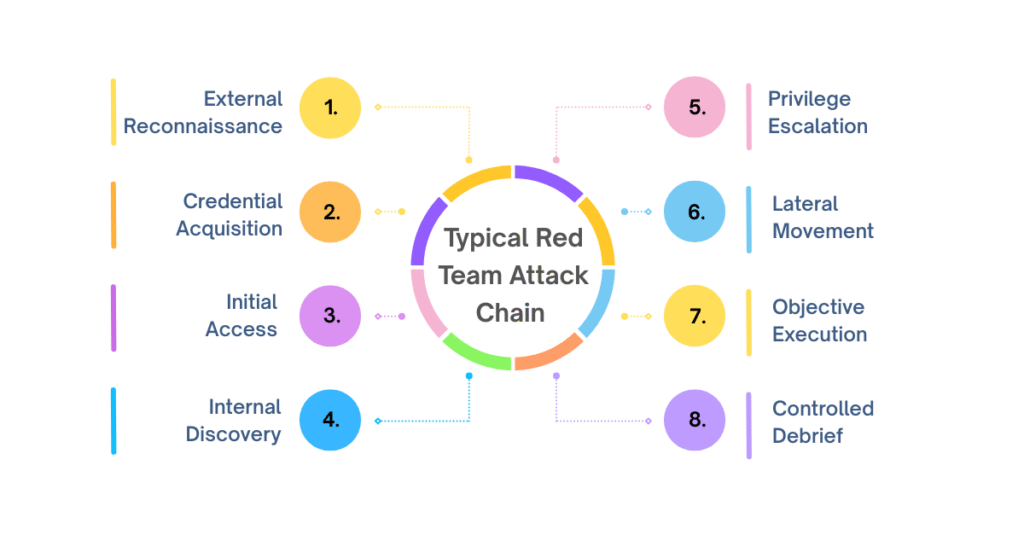

A Typical Red Team Attack Chain

The flow below illustrates how controlled adversary simulation often unfolds.

- External reconnaissance

- Credential acquisition

- Initial access

- Internal discovery

- Privilege escalation

- Lateral movement

- Objective execution

- Controlled reporting and debrief

Each stage feeds the next. The aim is not to rush to the final stage. The real value lies in how defenders respond at each earlier point.
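Because red teams use MITRE ATT&CK as a reference rather than a script, one practical use is to tag each stage of the chain with the ATT&CK Enterprise tactics it roughly corresponds to, then record which tactics the blue team actually detected. The mapping below is an illustrative judgement call, not an official correspondence; credential acquisition from external breach data, for instance, could arguably be filed under Reconnaissance (TA0043) or Resource Development (TA0042) instead.

```python
# Illustrative mapping of attack chain stages to ATT&CK Enterprise tactic IDs.
ATTACK_CHAIN = [
    ("External reconnaissance", ["TA0043"]),                  # Reconnaissance
    ("Credential acquisition", ["TA0006"]),                   # Credential Access
    ("Initial access", ["TA0001"]),                           # Initial Access
    ("Internal discovery", ["TA0007"]),                       # Discovery
    ("Privilege escalation", ["TA0004"]),                     # Privilege Escalation
    ("Lateral movement", ["TA0008"]),                         # Lateral Movement
    ("Objective execution", ["TA0009", "TA0010", "TA0040"]),  # Collection, Exfiltration, Impact
    ("Controlled reporting and debrief", []),                 # no ATT&CK equivalent
]


def undetected_stages(detected_tactics):
    """Return stages that map to ATT&CK tactics, none of which were detected."""
    detected = set(detected_tactics)
    return [
        stage
        for stage, tactics in ATTACK_CHAIN
        if tactics and not detected.intersection(tactics)
    ]
```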

Command and Control Evasion

Red teams must maintain access long enough to observe defensive reaction. This requires discreet command and control methods.

Encrypted outbound traffic is common in business environments. Red teaming techniques exploit that normality. Beaconing patterns are tuned to avoid simple anomaly detection. Traffic may be routed through reputable cloud services to blend with legitimate use.

Detection engineering becomes central at this stage. Security teams often rely on signature-based tools. Red team activity exposes where behavioural monitoring is weak or where telemetry is incomplete.
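One behavioural check that does not depend on signatures is interval regularity. An implant that calls home on a fixed schedule produces connection times with very little variation, so the coefficient of variation (standard deviation divided by mean) of the gaps between connections sits close to zero. The sketch below assumes connection timestamps have already been grouped by `(host, destination)`; the thresholds are placeholders. Adding random jitter to the beacon interval, which is how red teams tune beaconing, pushes the score up and defeats a fixed cut-off like this one, so treat it as a starting point rather than a detection.

```python
from statistics import mean, pstdev


def beacon_score(timestamps):
    """Coefficient of variation of the gaps between connections.
    Values near 0 indicate very regular, beacon-like check-ins."""
    ts = sorted(timestamps)
    intervals = [b - a for a, b in zip(ts, ts[1:])]
    if len(intervals) < 2:
        return None  # not enough data to judge regularity
    avg = mean(intervals)
    if avg == 0:
        return None
    return pstdev(intervals) / avg


def likely_beacons(connections, max_cv=0.1, min_connections=10):
    """connections: dict mapping (host, destination) -> list of epoch seconds."""
    flagged = []
    for key, times in connections.items():
        if len(times) < min_connections:
            continue
        score = beacon_score(times)
        if score is not None and score <= max_cv:
            flagged.append(key)
    return flagged
```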

Speed is often a giveaway in itself: a rushed intrusion produces the bursts of activity that monitoring is built to catch. Experienced attackers are patient. Red teams emulate that patience.

Objective-Based Testing

Not every engagement aims to extract data. Objectives are agreed in advance and vary according to organisational risk.

Some tests focus on financial fraud scenarios. Others simulate ransomware deployment but stop short of encryption. In regulated sectors, red teams may attempt to access sensitive records to test monitoring controls.

The objective must matter to the business. Testing without meaningful impact reduces the exercise to theatre.

Careful scoping prevents operational harm. Production systems remain protected. Yet realism is preserved. That balance defines professional red teaming.
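Scoping is often enforced in tooling as well as on paper. Below is a minimal sketch, using Python's standard `ipaddress` module, of a guard that checks each target against approved ranges and explicit exclusions before any action is taken. The ranges are hypothetical placeholders for whatever the rules of engagement specify. Exclusions are checked first so that a protected production subnet inside an approved range stays off limits.

```python
import ipaddress

# Hypothetical rules of engagement: approved ranges and explicit exclusions.
IN_SCOPE = [ipaddress.ip_network("10.20.0.0/16")]
EXCLUDED = [ipaddress.ip_network("10.20.5.0/24")]  # e.g. production systems


def is_in_scope(target):
    """Return True only if the target is inside an approved range
    and not inside any excluded range."""
    addr = ipaddress.ip_address(target)
    if any(addr in net for net in EXCLUDED):
        return False
    return any(addr in net for net in IN_SCOPE)
```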

The Human Factor

Technology attracts attention, but human behaviour often determines outcome.

Social engineering remains a core component of many red teaming techniques. Phone-based pretexting, physical access attempts, badge cloning. These methods feel uncomfortable because they expose cultural weaknesses rather than technical ones.

Employees rarely act maliciously. They act under pressure. A convincing call from someone claiming to be a senior manager can bypass established procedures in minutes.

Organisations that treat such findings as learning opportunities improve faster than those that look for blame.

Reporting That Drives Change

The final stage of any red team exercise involves structured reporting. This should not read like a vulnerability list.

Effective reports tell a story. They describe how access was achieved, how movement occurred, and which controls failed to intervene. Clear timelines help leadership grasp impact.
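A timeline becomes more useful when each red team action is paired with the moment, if any, that defenders first raised a related alert. The sketch below assumes the red team logs a start time per stage and the blue team supplies its first related alert time per stage; both input structures are hypothetical. It returns the time to detect for each stage in chronological order, with `None` for stages that were never detected.

```python
from datetime import datetime


def detection_gaps(red_actions, detections):
    """red_actions: list of (stage, start_time) pairs.
    detections: dict mapping stage -> time of first related alert.

    Returns (stage, minutes_to_detect) pairs sorted by start time,
    with None where the stage was never detected."""
    report = []
    for stage, started in sorted(red_actions, key=lambda item: item[1]):
        alert = detections.get(stage)
        if alert is None:
            report.append((stage, None))
        else:
            report.append((stage, (alert - started).total_seconds() / 60))
    return report
```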

Crucially, reporting should connect findings to business risk. A domain admin compromise means little without context. When framed as potential operational shutdown or regulatory exposure, urgency becomes clearer.

Good red team output strengthens blue teams rather than undermining them. Collaboration after the exercise often yields the greatest long-term benefit.

Where Red Teaming Sits Within Security Strategy

Red teaming techniques do not replace routine security work. Patch management, vulnerability scanning, security awareness training and logging remain essential.

Red teaming validates whether these investments function together. It tests integration and response maturity.

Mature organisations run red team exercises periodically, often alongside purple team sessions where offensive and defensive teams collaborate in real time. This mix sharpens detection capability quickly.

There is a quiet discipline to effective red teaming. It avoids spectacle. It measures reaction rather than ego.

Conclusion

Red teaming techniques expose how an organisation truly responds under simulated threat. They uncover gaps that compliance audits miss and reveal whether monitoring and incident response operate as intended.

The process demands careful planning, experienced operators and leadership buy-in. It also requires honesty. Findings can be uncomfortable, particularly when weaknesses stem from process or culture rather than technology.

CyberNX is a well-known cybersecurity firm that provides highly efficient red teaming services. Its professionals use methods such as role-based social engineering, application and network penetration testing, and client-side attacks to find weak spots in an organisation's critical assets. The services go beyond basic security checks to include full-scope testing aimed at strengthening defences.

Security posture is not defined by the number of tools deployed. It is defined by how the organisation reacts when those tools are tested. Red teaming provides that clarity.

Disclaimer

The content presented in this article is for informational purposes only and is not intended as professional cybersecurity, legal, or compliance advice. Red teaming activities involve controlled simulations that should only be conducted by qualified professionals within clearly defined legal and organisational boundaries. Unauthorised testing or misuse of the techniques discussed may violate laws, regulations, or organisational policies. Readers are strongly encouraged to consult certified cybersecurity experts and obtain proper approvals before implementing any red teaming strategies. The author and publisher assume no responsibility for any actions taken based on this information.